Lecture 19 | Different Classification Models

Draw the following data set:

| Business | Competition | Value | Profit |
| Freelancing | Low | Medium | Yes |
| Ecommerce | Medium | High | No |
| Freelancing | High | Medium | No |
| Ecommerce | Medium | Low | Yes |
| Whole Sale | High | Medium | No |
| Whole Sale | Low | High | Yes |

· A decision tree has nodes, branches, and leaves.

· We first have to decide which attribute becomes the root node.

The following concepts are used in machine learning to choose the root node.

· Entropy. Entropy is a measure of impurity: the more impure (mixed) a set of records is, the higher its entropy, and vice versa. In binary classification, for example with the Profit attribute, whose values are Yes or No, a group of records is pure once all of its records share the same value; at that point the impurity has been removed and the entropy is zero.

· Let us understand entropy with the help of an example. Suppose we have three baskets:

One basket has equal quantities of eggs and candies.

Second basket has more eggs than candies.

Third basket has only eggs.

· Our target is the eggs. For the first basket we have to find out how much impurity there is. The impurity depends on the ratio, or percentage, of eggs to candies; with equal quantities, picking an item is as random as it can be, so the impurity is at its highest.

· Similarly, in the second basket the impurity is less than in the first, and in the last basket there is no impurity at all because it contains only eggs.
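The basket example can be made concrete with a short calculation. The sketch below assumes the usual Shannon entropy formula, H = -sum(p * log2(p)) over the class proportions p, which the lecture does not state explicitly. The basket sizes (10 items each, with an 8-to-2 split for the second basket) and the helper name `entropy` are illustrative assumptions.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    h = 0.0
    for count in Counter(labels).values():
        p = count / total
        h -= p * log2(p)
    return h


# Assumed basket contents, chosen only to match the description above.
basket_1 = ["egg"] * 5 + ["candy"] * 5   # equal quantities
basket_2 = ["egg"] * 8 + ["candy"] * 2   # more eggs than candies
basket_3 = ["egg"] * 10                  # only eggs

for name, basket in [("Basket 1", basket_1),
                     ("Basket 2", basket_2),
                     ("Basket 3", basket_3)]:
    print(f"{name}: entropy = {entropy(basket):.3f}")
```

Under these assumed counts the first basket has the highest entropy (1 bit), the second a lower value (about 0.72), and the third an entropy of zero.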

Now we draw the decision tree with the help of the data assumed above. Remember that every node in the tree is a decision; that is why it is called a decision tree. Suppose we have four attributes (features), a fifth attribute that is the target (class label), and a binary classification task. By the time we arrive at a leaf node, the classes have been separated, so only one class remains at that node.

· Our goal is to find the entropy obtained by splitting on each of the four attributes. We want the attribute whose values separate the classes most clearly. The decision tree helps us reach the stage where the classes are clearly distinct.

· First of all, we choose the attribute that reduces the impurity in the data more quickly than the others; this attribute becomes the root node. For example, in order to purify milk we have to extract the water from it.
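One common way to measure how quickly an attribute reduces impurity is information gain: the entropy of the target before the split minus the weighted entropy after it. The lecture does not name this measure, so treat the sketch below as an assumption about the intended method. It uses the six-row business data set from the start of the lecture (with "Hight" read as "High"); the helper names `entropy` and `information_gain` are illustrative.

```python
from collections import Counter, defaultdict
from math import log2

# Rows: (Business, Competition, Value, Profit)
ROWS = [
    ("Freelancing", "Low", "Medium", "Yes"),
    ("Ecommerce", "Medium", "High", "No"),
    ("Freelancing", "High", "Medium", "No"),
    ("Ecommerce", "Medium", "Low", "Yes"),
    ("Whole Sale", "High", "Medium", "No"),
    ("Whole Sale", "Low", "High", "Yes"),
]
ATTRIBUTES = {"Business": 0, "Competition": 1, "Value": 2}
TARGET = 3  # column index of Profit


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return sum(-(n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, column):
    """Entropy of the target minus the weighted entropy after splitting."""
    parent = entropy([row[TARGET] for row in rows])
    groups = defaultdict(list)
    for row in rows:
        groups[row[column]].append(row[TARGET])
    weighted = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return parent - weighted


gains = {name: information_gain(ROWS, col) for name, col in ATTRIBUTES.items()}
root = max(gains, key=gains.get)
for name, gain in gains.items():
    print(f"{name}: gain = {gain:.3f}")
print("Root node:", root)
```

With this data, Competition yields the largest gain, so under this measure it would be chosen as the root node.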

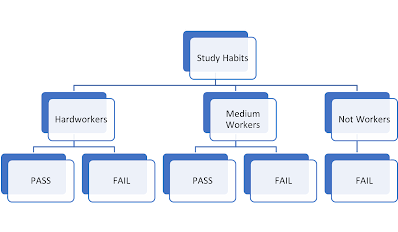

· As another example of arriving at the root node, take the task of classifying students into those who qualify and those who do not. We take the following attributes:

o Students who work hard.
o Students who do a moderate amount of work.
o Students who do not pay much attention to their studies.
o Students who have very good attendance.
o Students who have average attendance.
o Students who have poor attendance.
o Students who participate very actively.
o Students who participate moderately.
o Students who participate poorly.

Now the question arises: why do we use a decision tree? The answer is that it needs comparatively little computational power in machine learning. Another feature of the decision tree is that it can handle both categorical and numerical data.

A forest comprises many trees. In Random Forest we build more than one decision tree. Suppose we have seven different attributes on which to base a decision tree. For each tree we form, we use a random subset of five attributes instead of all seven, in order to avoid every tree repeating the same structure. The root node differs from tree to tree, because each candidate root node has a different entropy level: some trees have a high-entropy root node and others a low-entropy one. As a result, the trees are built on different features. So a random forest has many trees, each built on a different subset of the features.
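The idea of giving each tree its own random subset of attributes can be sketched in a few lines. The attribute names `A1` to `A7`, the number of trees, and the seed below are placeholders, not values from the lecture.

```python
import random

attributes = ["A1", "A2", "A3", "A4", "A5", "A6", "A7"]  # placeholder names
rng = random.Random(0)  # fixed seed so the run is repeatable

n_trees = 5  # assumed number of trees
# Each tree gets 5 of the 7 attributes, drawn without repetition.
subsets = [sorted(rng.sample(attributes, k=5)) for _ in range(n_trees)]

for i, subset in enumerate(subsets, start=1):
    print(f"Tree {i}: {subset}")
```

Because the subsets differ, the best available root attribute can differ from tree to tree as well.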

Suppose we have to decide whether a student will pass or fail on the basis of the following study habits:

Study Habits - Medium

Attendance - Regular

Random Forest is one of the most popular and commonly used

algorithms by Data Scientists. Random forest is a Supervised Machine Learning Algorithm that is used widely in Classification and Regression

problems. It builds decision trees on different samples and

takes their majority vote for classification and average in case of regression.

Random forest is a versatile machine learning algorithm

developed by Leo Breiman and Adele Cutler. It leverages an ensemble of multiple

decision trees to generate predictions or classifications. By combining the

outputs of these trees, the random forest algorithm delivers a consolidated and

more accurate result.

Its widespread popularity stems from its user-friendly nature

and adaptability, enabling it to tackle both classification and regression

problems effectively. The algorithm’s strength lies in its ability to handle

complex datasets and mitigate overfitting, making it a valuable tool for

various predictive tasks in machine learning.

One of the most important features of the Random Forest algorithm is that it can handle data sets containing continuous variables, as in the case of regression, and categorical variables, as in the case of classification. It performs well on both classification and regression tasks. In this tutorial, we will understand the working of random forest and implement random forest on a classification task.

Learning Objectives

- Learn the working of random forest with an example
- Understand the impact of different hyperparameters in random forest
- Implement Random Forest on a classification problem using scikit-learn

Real-Life Analogy of Random Forest

Let’s dive into a real-life analogy to understand this concept further. A student named X wants to choose a course after his 10+2, and he is unsure which course suits his skill set. So he decides to consult various people, such as his cousins, teachers, parents, degree students, and working people. He asks them varied questions, such as why he should choose a particular course, what job opportunities it offers, and what the course fee is. Finally, after consulting various people, he decides to take the course suggested by most of them.

Working of Random Forest Algorithm

Before understanding the working of the random forest algorithm in machine learning, we must look into the ensemble learning technique. Ensemble simply means combining multiple models. Thus a collection of models is used to make predictions rather than an individual model.

Ensemble uses two types of methods:

1. Bagging: It creates different training subsets from the training data by sampling with replacement, and the final output is based on majority voting. For example, Random Forest.

2. Boosting: It combines weak learners into a strong learner by creating sequential models, each of which tries to correct the errors of the previous one, so that the final model is more accurate. For example, AdaBoost, XGBoost.

As mentioned earlier, Random Forest works on the bagging principle. Now let’s dive in and understand bagging in detail.

Bagging

Bagging, also known as Bootstrap Aggregation, is the ensemble technique used by random forest. Bagging chooses a random sample (a random subset of rows) from the entire data set. Each model is therefore trained on a sample (a bootstrap sample) drawn from the original data with replacement, which is known as row sampling. This step of row sampling with replacement is called bootstrap. Each model is then trained independently and generates its own results. The final output is based on majority voting after combining the results of all models. This step, which involves combining all the results and generating the output based on majority voting, is known as aggregation.

Now let’s look at an example by breaking it down with the help of the following figure. Here the bootstrap samples (Bootstrap sample 01, Bootstrap sample 02, and Bootstrap sample 03) are drawn from the actual data with replacement, which means there is a high possibility that a sample contains repeated rows rather than only unique data. The models (Model 01, Model 02, and Model 03) obtained from these bootstrap samples are trained independently. Each model generates a result, as shown. The Happy emoji has a majority over the Sad emoji, so based on majority voting the final output is the Happy emoji.
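The two halves of bagging, bootstrap and aggregation, can be sketched with the standard library alone. The ten-row data set, the seed, and the three model outputs are made up for illustration; in a real run each model would be trained on its own bootstrap sample.

```python
import random
from collections import Counter

rng = random.Random(42)
rows = list(range(10))  # stand-in for the row indices of the actual data

# Bootstrap: draw as many rows as the data has, with replacement.
bootstrap_samples = [rng.choices(rows, k=len(rows)) for _ in range(3)]
for i, sample in enumerate(bootstrap_samples, start=1):
    print(f"Bootstrap sample {i:02d}: {sorted(sample)} "
          f"({len(set(sample))} unique rows)")

# Aggregation: each (hypothetical) model casts one vote; the majority wins.
model_outputs = ["Happy", "Sad", "Happy"]
final_output = Counter(model_outputs).most_common(1)[0][0]
print("Final output:", final_output)
```

Sampling with replacement is what allows a row to appear more than once in a sample while other rows are left out of it.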

Boosting

Boosting is one of the techniques that use the concept of ensemble learning. A boosting algorithm combines multiple simple models (also known as weak learners or base estimators) to generate the final output. It does this by building the weak models in series, so that each one builds on the results of those before it.

There are several boosting algorithms. AdaBoost was the first really successful boosting algorithm, developed for the purpose of binary classification. AdaBoost is short for Adaptive Boosting and is a prevalent boosting technique that combines multiple “weak classifiers” into a single “strong classifier.” There are other boosting techniques as well.
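As a rough illustration, scikit-learn ships an `AdaBoostClassifier` whose default weak learner is a one-level decision tree. The synthetic data from `make_classification` and all parameter values below are assumptions chosen only to make the sketch runnable.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import AdaBoostClassifier
from sklearn.model_selection import train_test_split

# Synthetic binary classification data (illustrative only).
X, y = make_classification(n_samples=300, n_features=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, train_size=0.7, random_state=0
)

# Weak learners are fitted one after another; each round gives more weight
# to the training rows that earlier rounds misclassified.
booster = AdaBoostClassifier(n_estimators=50, random_state=0)
booster.fit(X_train, y_train)

test_accuracy = booster.score(X_test, y_test)
print("Weak learners fitted:", len(booster.estimators_))
print("Test accuracy:", round(test_accuracy, 3))
```

Unlike bagging, the models here cannot be trained independently, because each round depends on the errors of the rounds before it.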

Steps Involved in Random Forest Algorithm

Step 1: In the random forest model, a subset of data points and a subset of features is selected for constructing each decision tree. Simply put, n random records and m features are taken from a data set having k records.

Step 2: An individual decision tree is constructed for each sample.

Step 3: Each decision tree generates an output.

Step 4: The final output is based on majority voting for classification or averaging for regression.

For example, consider the fruit basket as the data, as shown in the figure below. n samples are taken from the fruit basket, and an individual decision tree is constructed for each sample. Each decision tree generates an output, as shown in the figure. The final output is decided by majority voting. In the figure, the majority of the decision trees output apple rather than banana, so the final output is taken as apple.
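The four steps can be sketched with scikit-learn's `DecisionTreeClassifier` as the base model. This is a simplified illustration, not how `RandomForestClassifier` is implemented internally: here each tree gets one fixed feature subset, whereas scikit-learn's forest redraws the candidate features at every split. The synthetic data, n, m, and the number of trees are assumed values.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(0)
X, y = make_classification(n_samples=200, n_features=6, random_state=0)
k = X.shape[0]           # k records in the data set
n, m, n_trees = k, 3, 5  # n records and m features per tree (assumed values)

forest = []
for _ in range(n_trees):
    # Step 1: n random records (with replacement) and m random features.
    row_idx = rng.integers(0, k, size=n)
    col_idx = rng.choice(X.shape[1], size=m, replace=False)
    # Step 2: build one decision tree on this sample.
    tree = DecisionTreeClassifier(random_state=0)
    tree.fit(X[row_idx][:, col_idx], y[row_idx])
    forest.append((tree, col_idx))

# Step 3: every tree produces an output for every record.
votes = np.array([tree.predict(X[:, cols]) for tree, cols in forest])

# Step 4: majority voting over the trees (the labels here are 0 and 1).
final_output = (votes.mean(axis=0) > 0.5).astype(int)
accuracy = (final_output == y).mean()
print("Training accuracy of the vote:", accuracy)
```

An odd number of trees is used so that a two-class vote can never end in a tie.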

Important Features of Random Forest

- Diversity: Not all attributes/variables/features are considered while making an individual tree; each tree is different.
- Less affected by the curse of dimensionality: Since each tree does not consider all the features, the feature space each tree works in is reduced.
- Parallelization: Each tree is created independently out of different data and attributes. This means we can make full use of the CPU to build random forests.
- Train-Test split: In a random forest, a separate split is not strictly needed to estimate performance, because for each tree roughly one-third of the data (about 37% for a bootstrap sample of the same size as the data) is never seen by that tree. A held-out test set remains useful for a final, unbiased check.
- Stability: Stability arises because the result is based on majority voting/averaging.
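The share of data a tree never sees follows from a short calculation. Assuming each bootstrap sample draws n rows with replacement from n rows, a given row is missed with probability (1 - 1/n)^n, which approaches 1/e (about 0.368) as n grows. The sketch below checks this for a few values of n.

```python
import math

for n in (10, 100, 1000, 100000):
    unseen = (1 - 1 / n) ** n  # probability that one row is never drawn
    print(f"n = {n:>6}: unseen fraction = {unseen:.4f}")

limit = math.exp(-1)
print(f"limit 1/e  = {limit:.4f}")
```

This is why the unseen share is usually quoted as roughly one-third rather than a fixed 30%.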

Difference Between Decision Tree and Random Forest

Random forest is a collection of decision trees; still, there

are a lot of differences in their behavior.

| Decision trees | Random Forest |
| 1. Decision trees normally suffer from the problem of overfitting if they are allowed to grow without any control. | 1. Random forests are created from subsets of data, and the final output is based on averaging or majority voting; hence the problem of overfitting is reduced. |
| 2. A single decision tree is faster in computation. | 2. It is comparatively slower. |
| 3. When a data set with features is taken as input by a decision tree, it formulates a set of rules to make predictions. | 3. Random forest randomly selects observations, builds decision trees, and takes the average or majority result. It doesn’t use any set of formulas. |

Thus random forests are much more successful than single decision trees, but only if the trees are diverse and individually reasonable.

Important Hyperparameters in Random Forest

Hyperparameters are used in random forests to either enhance the

performance and predictive power of models or to make the model faster.

Hyperparameters to Increase the Predictive Power

n_estimators: Number of trees the algorithm builds before taking the majority vote or averaging the predictions.

max_features: Maximum number of features random forest considers when splitting a node.

min_samples_leaf: Determines the minimum number of samples required to be at a leaf node.

criterion: How to split the node in each tree? (Entropy/Gini impurity/Log Loss)

max_leaf_nodes: Maximum leaf nodes in each tree.

Hyperparameters to Increase the Speed

n_jobs: It tells the engine how many processors it is allowed to use. If the value is 1, it can use only one processor, but if the value is -1, there is no limit.

random_state: Controls the randomness of the sampling. The model will always produce the same results if it has a fixed value of random_state and has been given the same hyperparameters and training data.

oob_score: OOB means out of bag. It is a random forest cross-validation method. For each tree, about one-third of the sample is not used to train that tree; it is instead used to evaluate performance. These samples are called out-of-bag samples.
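The hyperparameters above map directly onto arguments of scikit-learn's `RandomForestClassifier`. The sketch below is illustrative only: the data comes from `make_classification`, the helper `build_forest` is hypothetical, and the values chosen for each argument are assumptions, not recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data (illustrative only).
X, y = make_classification(n_samples=400, n_features=10, random_state=0)


def build_forest():
    return RandomForestClassifier(
        n_estimators=100,      # number of trees
        max_features="sqrt",   # features considered at each split
        min_samples_leaf=5,    # minimum samples at a leaf node
        criterion="entropy",   # split quality measure
        max_leaf_nodes=16,     # cap on leaves per tree
        n_jobs=-1,             # use all available processors
        random_state=42,       # fixed seed for repeatable results
        oob_score=True,        # evaluate on out-of-bag samples
    )


model_a = build_forest().fit(X, y)
model_b = build_forest().fit(X, y)

print("OOB score:", round(model_a.oob_score_, 3))
# Same seed, hyperparameters, and data: the two fits should agree.
print("Identical predictions:", (model_a.predict(X) == model_b.predict(X)).all())
```

Fitting the same configuration twice is a quick way to see the effect of `random_state`; removing it would let the two forests differ.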

Coding in Python – Random Forest

Now let’s implement Random Forest in scikit-learn.

1. Let’s import the libraries.

    # Importing the required libraries
    import numpy as np
    import pandas as pd
    import matplotlib.pyplot as plt
    import seaborn as sns
    %matplotlib inline

2. Import the dataset.

Python Code:

3. Putting Feature Variable to X and Target variable to y.

    # Putting feature variable to X
    X = df.drop('heart disease', axis=1)
    # Putting response variable to y
    y = df['heart disease']

4. Train-Test-Split is performed

    # Now let's split the data into train and test
    from sklearn.model_selection import train_test_split

    # Splitting the data into train and test
    X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.7, random_state=42)
    X_train.shape, X_test.shape

5. Let’s import RandomForestClassifier and fit the data.

    from sklearn.ensemble import RandomForestClassifier

    classifier_rf = RandomForestClassifier(random_state=42, n_jobs=-1, max_depth=5,
                                           n_estimators=100, oob_score=True)

    %%time
    classifier_rf.fit(X_train, y_train)

    # checking the oob score
    classifier_rf.oob_score_

6. Let’s do hyperparameter tuning for Random Forest using GridSearchCV and fit the data.

    rf = RandomForestClassifier(random_state=42, n_jobs=-1)

    params = {
        'max_depth': [2, 3, 5, 10, 20],
        'min_samples_leaf': [5, 10, 20, 50, 100, 200],
        'n_estimators': [10, 25, 30, 50, 100, 200]
    }

    from sklearn.model_selection import GridSearchCV

    # Instantiate the grid search model
    grid_search = GridSearchCV(estimator=rf, param_grid=params, cv=4,
                               n_jobs=-1, verbose=1, scoring="accuracy")

    %%time
    grid_search.fit(X_train, y_train)

    grid_search.best_score_

    rf_best = grid_search.best_estimator_
    rf_best

From hyperparameter tuning, we can fetch the best estimator, as shown. The best set of parameters identified was max_depth=5, min_samples_leaf=10, n_estimators=10.

7. Now, let’s visualize two of the trees in the forest.

    from sklearn.tree import plot_tree

    # list(...) keeps feature_names a plain list, which plot_tree accepts
    plt.figure(figsize=(80, 40))
    plot_tree(rf_best.estimators_[5], feature_names=list(X.columns),
              class_names=['Disease', "No Disease"], filled=True);

    plt.figure(figsize=(80, 40))
    plot_tree(rf_best.estimators_[7], feature_names=list(X.columns),
              class_names=['Disease', "No Disease"], filled=True);

The trees created by estimators_[5] and estimators_[7] are

different. Thus we can say that each tree is independent of the other.

8. Now let’s sort the features by their importance.

    rf_best.feature_importances_

    imp_df = pd.DataFrame({
        "Varname": X_train.columns,
        "Imp": rf_best.feature_importances_
    })
    imp_df.sort_values(by="Imp", ascending=False)

Random Forest Algorithm Use Cases

This algorithm is widely used in E-commerce, banking, medicine,

the stock market, etc.

For example: In the Banking industry, it can be used to find

which customer will default on a loan.

Advantages and Disadvantages of Random Forest Algorithm

Advantages

1. It can be used in classification and regression problems.

2. It reduces the problem of overfitting, as the output is based on majority voting or averaging.

3. It can perform well even if the data contains null/missing values, although how missing values are handled depends on the implementation.

4. Each decision tree created is independent of the others; thus, it shows the property of parallelization.

5. It is highly stable, as the average of the answers given by a large number of trees is taken.

6. It maintains diversity, as not all the attributes are considered while making each decision tree, though this is not true in all cases.

7. It is less affected by the curse of dimensionality. Since each tree does not consider all the attributes, the feature space is reduced.

8. A separate train-test split is not strictly needed to estimate performance, as roughly one-third of the data is never seen by each decision tree built from a bootstrap sample.

Disadvantages

1. Random forest is highly complex compared to decision trees,

where decisions can be made by following the path of the tree.

2. Training time is more than other models due to its

complexity. Whenever it has to make a prediction, each decision tree has to

generate output for the given input data.

Conclusion

Random forest is a great choice if anyone wants to build the

model fast and efficiently, as one of the best things about the random forest

is it can handle missing values. It is one of the best techniques with high

performance, widely used in various industries for its efficiency. It can

handle binary, continuous, and categorical data. Overall, random forest is a

fast, simple, flexible, and robust model with some limitations.

Key Takeaways

- Random forest algorithm is an ensemble learning technique combining numerous classifiers to enhance a model’s performance.
- Random Forest is a supervised machine-learning algorithm made up of decision trees.
- Random Forest is used for both classification and regression problems.

Frequently Asked Questions

Q1. How do you explain a random forest?

A. Random Forest is a supervised

learning algorithm that works on the concept of bagging. In bagging, a group of

models is trained on different subsets of the dataset, and the final output is

generated by collating the outputs of all the different models. In the case of

random forest, the base model is a decision tree.

Q2. How does random forest work step by step?

A. The following steps will tell you how random forest works:

1. Create Bootstrap Samples: Construct different samples of the dataset with replacement by randomly selecting rows and columns from the dataset. These are known as bootstrap samples.

2. Build Decision Trees: Construct a decision tree on each bootstrap sample as per the hyperparameters.

3. Generate Final Output: Combine the output of all the decision trees to generate the final output.

Q3. What are the advantages of Random Forest?

A. Random Forest tends to have low variance, since it works on the concept of bagging and averages many trees. It works well even with a dataset with a large number of features, since each tree works on a subset of features. Moreover, it can be trained faster in parallel, as the trees are independent of each other.

Q4. Why do we use random forest algorithms?

A. Random Forest is a popular

machine learning algorithm used for classification and regression tasks due to

its high accuracy, robustness, feature importance, versatility, and

scalability. Random Forest reduces overfitting by averaging multiple decision

trees and is less sensitive to noise and outliers in the data. It provides a

measure of feature importance, which can be useful for feature selection and

data interpretation.

The media shown in this article are not owned by Analytics

Vidhya and are used at the Author’s discretion.
